
3/1/2024

Python decode mat file

There are various types of error handlers, some of which are mentioned below:

| Type of Error | Behavior |
| --- | --- |
| strict | Default behavior, which raises UnicodeDecodeError on failure (UnicodeEncodeError when encoding). |
| ignore | Ignores the un-encodable Unicode characters and drops them from the result. |
| replace | Replaces all un-encodable Unicode characters with a question mark (?). |
| backslashreplace | Inserts a backslash escape sequence (\uNNNN) instead of un-encodable Unicode characters. |

Let us look at the above concepts using a simple example.
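The error-handling modes described above can be sketched with the built-in `str.encode` and `bytes.decode` methods. This is a minimal illustration, not the original post's code: the sample strings `text` and `bad_bytes` are made up for the demonstration, and only the sentence "This is a simple sentence." comes from the post.

```python
# Encode a str to bytes with UTF-8, then decode it back.
original = "This is a simple sentence."
encoded = original.encode("utf-8")
decoded = encoded.decode("utf-8")
print("Original string:", original)
print("Encoded string:", encoded)
print("Decoded string:", decoded)

# Illustrative input: the euro sign (U+20AC) cannot be represented in ASCII.
text = "price: 5\u20ac"

# Try each non-default error handler when encoding to ASCII.
results = {}
for handler in ("ignore", "replace", "backslashreplace"):
    results[handler] = text.encode("ascii", errors=handler)
    print(handler, "->", results[handler])

# "strict" is the default: encoding raises instead of substituting.
try:
    text.encode("ascii")
    strict_error = None
except UnicodeEncodeError as exc:
    strict_error = type(exc).__name__
print("strict (encode) ->", strict_error)

# Decoding has the same handlers; strict decoding raises UnicodeDecodeError.
bad_bytes = b"abc\xff"  # 0xff is never valid in UTF-8
try:
    bad_bytes.decode("utf-8")
    decode_error = None
except UnicodeDecodeError as exc:
    decode_error = type(exc).__name__
print("strict (decode) ->", decode_error)
```

Passing the handler through the `errors` argument keeps the choice explicit at each call site, which is usually easier to audit than relying on the default.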

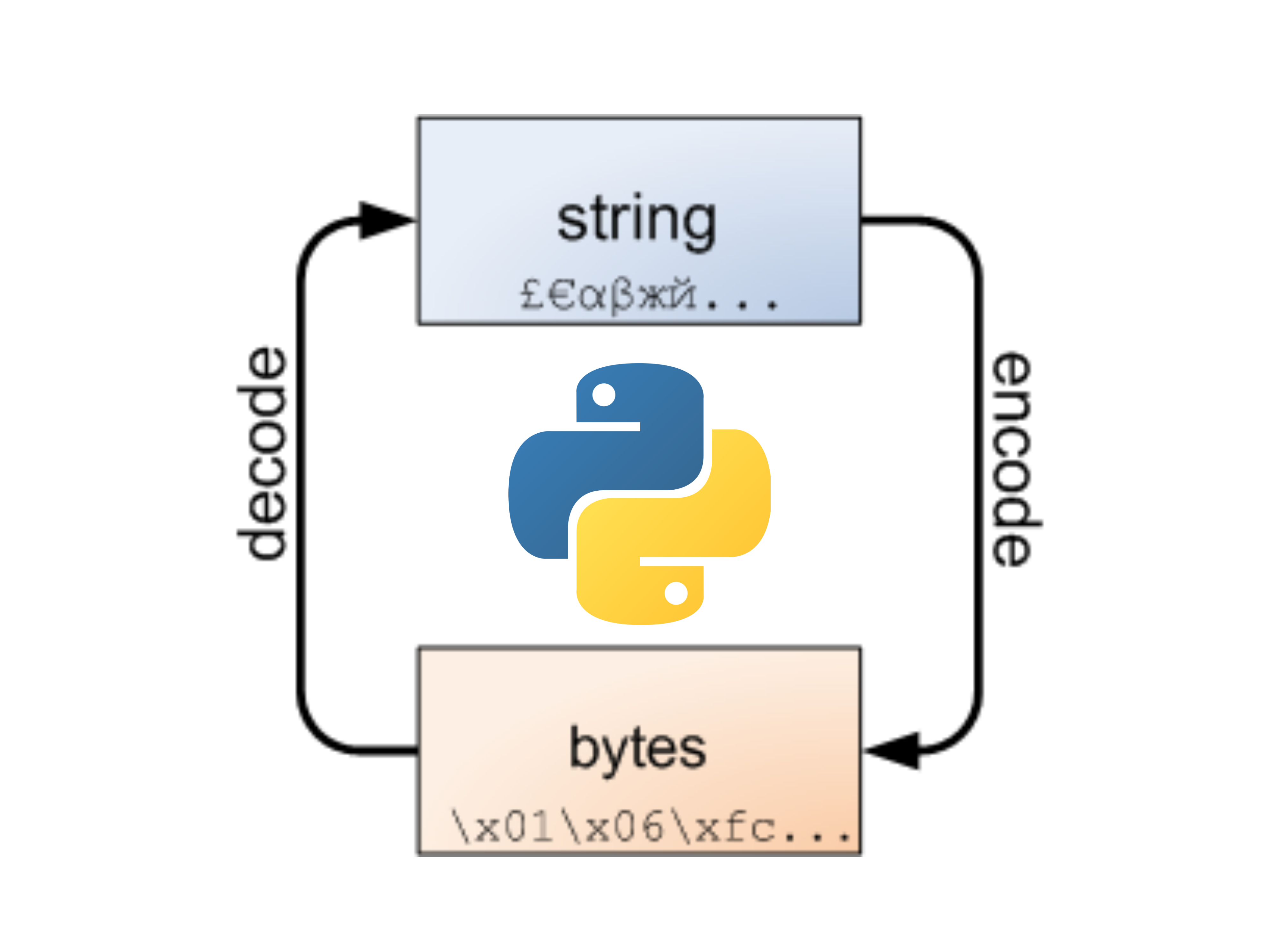

Original string: This is a simple sentence.

Encoded string: b'This is a simple sentence.'

NOTE: As you can observe, we have encoded the input string in the UTF-8 format. Although the output looks much the same, the encoded string is prefixed with a b. This means that the string has been converted to a sequence of bytes, which is how it is stored on any computer. The bytes themselves are not human-readable; they are only displayed as the original text for readability, with the b prefix denoting that the value is not a string but a sequence of bytes.

"data" is the field containing audio and EEG information. "eeg": The EEG signals are stored in this field. "leftWav" and "rightWav" contain the aligned audio stimuli of the left and right competing speakers. (If you want to play back the signal through earphones, please apply an HRTF filter.) "chan" describes the names of the EEG channels.

"azimuth" indicates the directions of the two competing speakers. The directions of the competing speakers are drawn randomly from fifteen alternatives.

This dataset provides the preprocessed EEG recordings and the aligned audio stimuli signals, as well as the attention information. Note that 28 subjects participated in our experiments, and the data of seven subjects were removed from further analysis due to device failure. Please contact the author for additional information.

The dataset includes EEG signals and audio stimuli. The EEG signals were preprocessed in EEGLAB and sliced into trials. Data of each subject are stored in .mat files separately, including the EEG signal, attention information, and the competing speakers' information for each trial. Note that because we manually removed some abnormal parts from the original EEG recordings, in addition to ICA and filtering, the EEG duration of each trial is slightly different. After loading the .mat file into MATLAB or Python, you can access the data and experiment information through the "data" and "expinfo" fields.

"expinfo" is a table containing attention information. "attended_lr" indicates the relative attended direction (the relative direction of the attended speaker). For example, "left" indicates the subject was instructed to attend to the speaker on the left side. "l_audio" and "r_audio" indicate the file names of the left and right competing speakers, respectively. You can directly extract the audio waveform from the "data" field.

Installing SciPy: A Key Python Library for MAT-Files

Similar to how we use the csv module to work with .csv files, we'll import the scipy library to work with .mat files.

Matlab up to 7.1

.mat files created with MATLAB up to version 7.1 can be read using the mio module, part of scipy.io. For example, two variables lat and lon can be read from a mat file called 'test.mat' by loading the file and indexing the result by variable name. Reading structures (and arrays of structures) is supported, and elements are accessed with the same syntax as in MATLAB.

This is an auditory attention decoding dataset including EEG recordings of 21 subjects while they were instructed to attend to one of two competing speakers at two different locations. Unlike previous datasets (such as the KUL dataset), the locations of the two speakers are randomly drawn from fifteen alternatives.

All subjects gave formal written consent approved by the Nanjing University ethical committee before the experiment and received financial compensation upon completion. The EEG data were recorded with the 32-channel EMOTIV Epoc Flex Saline system at a sampling rate of 128 Hz (downsampled from 1024 Hz) in a low-reverberant listening room.

For each subject, the experiment includes 32 trials. In each trial, the subject was exposed to a pair of randomly selected stimuli, whose directions were randomly drawn from the 15 possible competing speaker directions, i.e., ±135°, ±120°, ±90°, ±60°, ±45°, ±30°, ±15° and 0°.
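To make the trial design concrete, the sketch below enumerates the 15 directions and draws a pair of them for each of the 32 trials. It is only an illustration of the description above, not the authors' randomization procedure: the fixed seed, the use of `random.sample`, and the assumption that the two speakers in a trial always occupy distinct directions (suggested by "two different locations") are choices made for this example.

```python
import random

# The 15 competing-speaker directions listed in the text, in degrees.
magnitudes = [135, 120, 90, 60, 45, 30, 15]
directions = sorted([0] + [sign * m for m in magnitudes for sign in (-1, 1)])

N_TRIALS = 32            # trials per subject, as stated in the text
rng = random.Random(0)   # fixed seed, only so the sketch is repeatable

# Assumption: the two speakers in one trial never share a direction,
# so each pair is sampled without replacement.
trials = [tuple(rng.sample(directions, 2)) for _ in range(N_TRIALS)]

print(len(directions), "directions:", directions)
print("first trial (speaker directions in degrees):", trials[0])
```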
